AI Technology Justifies ‘Might Is Right’

Google built its Gemini AI by scraping enormous amounts of text, images, and data from the internet without asking permission or paying creators. Gemini's neural network weights are compressed encodings of that training data, allowing efficient storage and retrieval of patterns from the source material. Google now faces multiple copyright lawsuits over this practice.

Google claims Gemini is being targeted by what it calls model distillation attacks. In simple terms, someone repeatedly queries Gemini, records the answers, and then trains a cheaper AI to behave similarly. The querying is sometimes repeated tens or even hundreds of thousands of times.

It’s like asking a top student every possible exam question and then recreating those answers in your own notes. Google frames this as intellectual property theft and says it violates its terms of service.
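The query, record, and train loop described above can be illustrated with a deliberately tiny sketch. It is a toy built on assumptions, not how Gemini or any real distillation pipeline works: the `teacher` function is a hypothetical stand-in for an expensive model, the "student" is a straight line fitted by ordinary least squares, and the names `query_teacher` and `train_student` are invented for illustration. Real distillation trains a neural network on the teacher's outputs (often its full probability distributions), but the principle is the same: the student never sees the teacher's internals, only its answers.

```python
import random
from typing import Callable, List, Tuple


def teacher(x: float) -> float:
    """Hypothetical stand-in for an expensive, proprietary model.

    The student never sees these coefficients; it only sees the answers.
    """
    return 3.0 * x + 2.0


def query_teacher(n_queries: int, seed: int = 0) -> List[Tuple[float, float]]:
    """Repeatedly query the teacher and record each (question, answer) pair."""
    rng = random.Random(seed)
    records = []
    for _ in range(n_queries):
        x = rng.uniform(-10.0, 10.0)
        records.append((x, teacher(x)))
    return records


def train_student(records: List[Tuple[float, float]]) -> Callable[[float], float]:
    """Fit a cheap model (an ordinary least-squares line) to the recorded answers."""
    n = len(records)
    mean_x = sum(x for x, _ in records) / n
    mean_y = sum(y for _, y in records) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in records)
    var = sum((x - mean_x) ** 2 for x, _ in records)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


records = query_teacher(1000)
student = train_student(records)
print(student(5.0), teacher(5.0))
```

With 1,000 recorded answers, the student's prediction for an input of 5.0 should land very close to the teacher's 17.0, even though the student was trained only on the teacher's outputs.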

Hypocrisy

Google is suddenly very protective of “its IP,” even though it showed little concern for the IP of writers, artists, journalists, and websites whose material was used to train Gemini in the first place.

Google's position is that it transformed public content into a private, valuable product. Whether that transformation is legally or ethically sufficient is the multi-billion-dollar question. In Google's view, a distillation attack copies the product itself, not just the training data.

What is Google's policy on YouTube content? It does not permit more than nine seconds or so of extracted content from any source video, even for the sake of reference or explanation. Yet it has the gumption to claim that its own industrial-scale expropriation of data from the internet is fair use.

The Fear

AI companies are spending tens of billions of dollars on infrastructure, yet the outputs of major models are becoming almost indistinguishable from one another. If smaller players can cheaply clone the behavior of expensive models, Google’s competitive advantage collapses.

DeepSeek, a Chinese startup that shocked Silicon Valley in early 2025 by releasing a powerful AI model that was far cheaper to run, has been accused of using a similar technique.

Companies are struggling to monetize AI through subscriptions, ads, and enterprise services. If distillation lets small players replicate elite models “for pennies on the dollar,” the entire business model is threatened.

Google is angry about being treated the way it treated the rest of the internet, and its moral outrage rings hollow.

Philosophy

Accumulation is theft: theft from society. The rich are therefore often obligated to a higher standard of duty toward society, especially toward its less well-off members.

The controversy isn’t really about the act of model distillation itself. It’s about the profound and blatant hypocrisy of a company that built its entire business model on taking without permission now complaining that others are doing the same thing to it. It highlights the fundamental lack of any philosophy at the heart of the generative AI boom.

The entire boom is built on a series of unexamined, contradictory actions rather than a coherent set of principles.

This lack of philosophy stems from a clash between two powerful, but incompatible, worldviews that the industry is exploiting without ever resolving:

The first is the “Information Wants to Be Free” ethos. This is the legacy of the early internet, Silicon Valley, and hacker culture. It views data, knowledge, and information as a commons that should be open and accessible to all. Scraping the web is simply the technical manifestation of this belief.

The second is aggressive corporate capitalism. This worldview is all about protecting assets, building moats, creating scarcity, and generating maximum return on investment for shareholders. A proprietary AI model is the ultimate corporate asset.

AI companies want to have it both ways. They want the freedom and lack of friction of the “information wants to be free” ethos when they are the ones taking data. But they demand the full protection of corporate capitalism when they are the ones who have the data (in the form of a trained model). They’ve built their business on the gap between these two philosophies without ever bridging it.

It is a system operating on nothing but momentum and self-preservation.

Explanation

No AI offers an answer to the larger philosophical questions raised above. Yet when I ran this article through an AI whose creator had settled a million-dollar copyright suit with authors, it too faulted me for not suggesting a solution. It was like blaming the homeowner for not dealing with the arsonist before he set the house on fire.

Did AI companies ever invite the public's views? Did they invite any discussion? No. They went ahead and appropriated whatever they could lay their hands on.

An Example

Imagine a famous chef who built his career by traveling the world and secretly photographing every dish he saw in other people’s restaurants, then recreating those dishes in his own kitchen. He never paid the original chefs or asked for permission.

Now the chef opens his own restaurant, and when a food blogger tries to reverse-engineer his signature dish by ordering it and working out the ingredients, the chef screams:

“That’s intellectual property theft! You’re stealing my work!”

Scale of Theft

A journalist reads 100 articles to learn how to write. A programmer studies 10,000 lines of open-source code on GitHub. This is considered fair use, apprenticeship, or simply “culture.”

An AI ingests billions of articles and terabytes of code in a matter of weeks. It doesn’t “learn” in the human sense; it statistically models the entire corpus.

The scale changes the nature of the act. Is it still “learning” when you can absorb the entirety of human publishing in a single training run? The law (like fair use) was never designed with this kind of industrial-scale data processing in mind. The automation removes the friction that traditionally defined acceptable use.

Yet the AI company objects when distillation at the same scale is applied to its own model.

Welcome to the jungle you created in the first place.

Public Data Turned Private

Data on the public web is seen as a free, open resource to be mined without consent or compensation. The philosophy here is one of extraction and opportunism, justified by the “public” nature of the data and the transformative purpose of the technology.

Once that data is processed and an AI model is built, the model is treated as a proprietary, defensible asset: a multi-billion-dollar piece of intellectual property. The philosophy here is one of absolute ownership and control. The justification for this switch is called the “Transformation Argument.”

The Transformation Argument

AI companies claim their models represent “transformative use” of training data. They argue that converting billions of documents into neural network weights creates something legally distinct from the source material.

This is false.

Technically Fraudulent

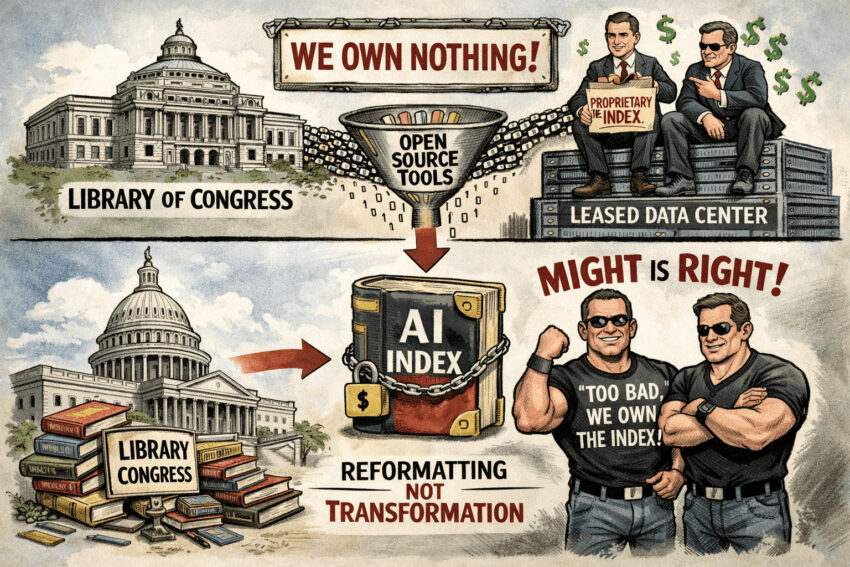

Imagine you have 6GB of data on your drive. Using open source tools like GPT4All and an embedding model, you compress it into a 500MB index file in GGUF format (an open source format created for llama.cpp).

Question: Does creating this compressed index make you the legal owner of a new intellectual property, independent of the original 6GB? Answer: Obviously not.

The index is just an efficient retrieval and query mechanism. It encodes the semantic content of the original material in a different storage format. Compression and reformatting do not grant ownership.

If the source 6GB contained copyrighted material you had no rights to, the 500MB index inherits that same legal problem. You cannot claim the index as yours simply because you changed the file format.
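To make the index argument concrete, here is a minimal sketch of a searchable index over a small document collection. It assumes a plain keyword (inverted) index in place of the embedding model and GGUF file the example mentions, and the names `build_index` and `search`, along with the sample documents, are hypothetical. The point it illustrates is narrow: everything in the index is derived from the source text, and every useful answer it gives is the source text itself.

```python
import re
from collections import defaultdict
from typing import Dict, List, Set, Tuple


def tokenize(text: str) -> List[str]:
    """Lower-case the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(documents: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each word to the ids of the documents that contain it."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for doc_id, text in documents.items():
        for token in tokenize(text):
            index[token].add(doc_id)
    return index


def search(index: Dict[str, Set[str]], documents: Dict[str, str],
           query: str) -> List[Tuple[str, str]]:
    """Return (id, original text) for documents containing every query word."""
    tokens = tokenize(query)
    if not tokens:
        return []
    hits = set.intersection(*(index.get(t, set()) for t in tokens))
    # The answer is the source material itself; the index only says where to look.
    return [(doc_id, documents[doc_id]) for doc_id in sorted(hits)]


# Hypothetical sample documents standing in for the "6GB" collection.
docs = {
    "a": "The chef photographed every dish he saw.",
    "b": "A blogger reverse-engineered the signature dish.",
    "c": "Copyright law predates industrial-scale scraping.",
}
index = build_index(docs)
print(search(index, docs, "signature dish"))
```

Running it prints `[('b', 'A blogger reverse-engineered the signature dish.')]`: the index is smaller than the collection, but what it returns is the original document.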

AI Models Work Identically

AI companies do exactly the same thing at industrial scale:

- Ingest petabytes of copyrighted content without permission

- Process it through training algorithms (many of which are open source)

- Store the compressed representation as model weights

- Claim the weights are proprietary intellectual property

The technical process is identical to your 6GB example. The only differences are scale and the use of corporate legal language to obscure what actually happened.

Open Source Tools

For this Grand Theft of data, the entire technical stack is publicly available:

- PyTorch and TensorFlow (open source frameworks)

- FAISS and similar vector search libraries (open source)

- GGUF and other model formats (open source)

- Embedding and compression techniques (published research)

Conclusion

AI companies used open source tools to compress data they never licensed, and then claimed ownership of the compressed output. They own nothing. Many do not even own the data centers where the processing takes place, since these are leased.

This would be like downloading the Library of Congress, creating a searchable index using open source software, and claiming the index as your proprietary asset independent of the source material.

This is reformatting, not transformation. That is also the AI industry's legal defence, if it forgoes its claim of copyright. It can get a free pass in all copyright infringement suits if it admits that it owns nothing except a new index of the internet.

This is a new way of saying ‘Might Is Right.’

Reference:

https://futurism.com/future-society/google-copying-ai-permission
